NASA live stream - Earth From Space LIVE Feed | Incredible ISS live stream of Earth from space

1/14/2017

The USDA Forest Service is proposing widespread forest thinning on our public lands across the West in a misguided attempt to reduce the impact of drought, fire, and insects (see National Forest Restoration Projects, Sierra Nevada National Forest Land Management Plan Revisions, news articles). These logging schemes are the latest in a series of Forest Service attempts to chainsaw their way out of a perceived problem. However, forests in the western United States have evolved to naturally self-thin uncompetitive trees through forest fires, insects, or disease. Forest fires and other disturbances are natural elements of healthy, dynamic forest ecosystems, and have been for millennia. These processes cull the weak and make room for the continued growth and reproduction of stronger, climate-adapted trees. Remaining live trees are genetically adapted to survive the new climate conditions and their offspring are also more climate-adapted, resistant, and resilient than the trees that perished. Without genetic testing of every tree in the forest, indiscriminate thinning will remove many of the trees that are intrinsically the best-adapted to naturally survive drought, fire, and insects.

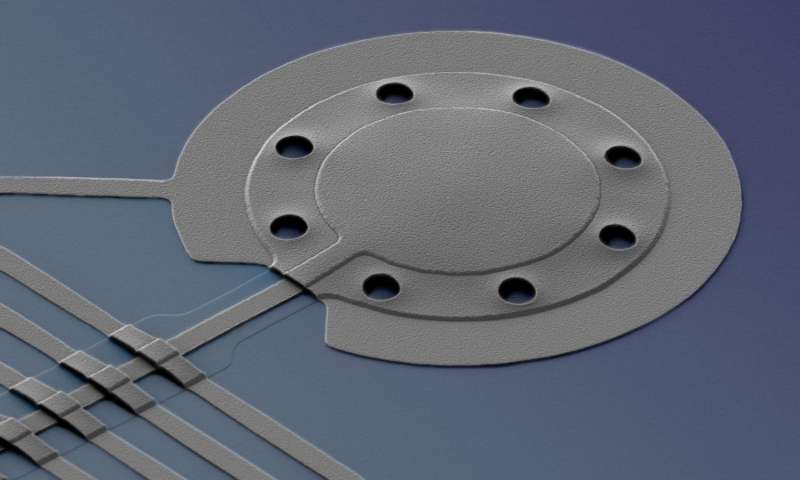

Recent studies have demonstrated that genetic variation is high within populations of forest trees, with especially high diversity found at the lower latitudes and altitudes that form the edges of a species’ distribution. Local genetic and epigenetic variation makes some individuals naturally more likely to survive drought, fire, and insect outbreaks. This is because ecotones, or transitional areas, are where each species experiences the most extreme climate conditions that it can survive, the lowest elevation and latitude boundary. These natural edges are where trees with the most resistant and resilient adaptations are found. It is also where significant mortality is to be expected as part of the process where the distribution of tree species shifts north and uphill in our warming climate. After forest fire or insect-caused mortality, green forest naturally regenerates without any need for expensive human interventions. Locally climate-adapted tree seedlings sprout and grow, and nitrogen-fixing shrubs and forbs replenish the soil and curb erosion. In the meantime, standing dead trees, snags, and logs provide critical food and shelter for many types of wildlife. Seedlings used in most Forest Service replanting efforts are bred for timber production, and although breeding programs are now looking for drought and temperature tolerance, there is a natural breeding program already underway that costs nothing and ensures the most locally adapted individuals will resist and persist as the climate warms. Weather and climate data are painting a clear picture of warmer, drier summers across most of the western United States. Forest fires are strongly correlated with the Palmer Drought Severity Index where drier years make bigger fires, so people living in fire-prone areas need to be prepared for wildfire as an inevitable occurrence, and take all precautions to protect their homes with defensible space and ember-stopping attic vent screens. 
Thinning the forest within a hundred yards of structures and some minor fireproof retrofitting are the only practices proven to protect homes and communities from wildfire. The West is getting drier than it was in the recent past, and that will require some adaptation, particularly in light of the significant recent human population growth in rural areas. We must also understand that recent fires are not unprecedented in size or severity, as is often claimed by people who make money cutting our trees. The early 20th century sometimes saw 30 million acres of forest burn, and that was before widespread fire suppression, so fuel buildup is not the looming fire monster some folks who profit from logging have made it out to be. The world is an inherently dynamic and changeable environment, and fighting change is generally much more expensive than adapting to it.

Studies referred to are:

Kolb, T.E., Grady, K.C., McEttrick, M.P. and Herrero, A., 2016. Local-scale drought adaptation of ponderosa pine seedlings at habitat ecotones. Forest Science, 62(6), pp.641-651.

Prunier, J., Verta, J.P. and MacKay, J.J., 2016. Conifer genomics and adaptation: at the crossroads of genetic diversity and genome function. New Phytologist, 209(1), pp.44-62.

Pinnell, S., 2016. Resin duct defenses in ponderosa pine during a mountain pine beetle outbreak: genetic effects, mortality, and relationships with growth. PhD Thesis, University of Montana, Missoula.

NIST researchers applied a special form of microwave light to cool a microscopic aluminum drum to an energy level below the generally accepted limit, to just one fifth of a single quantum of energy. The drum, which is 20 micrometers in diameter and 100 nanometers thick, beat 10 million times per second while its range of motion fell to nearly zero.
Credit: Teufel/NIST

Read more at: https://phys.org/news/2017-01-physicists-cool-microscopic-quantum-limit.html#jCp

Physicists at the National Institute of Standards and Technology (NIST) have cooled a mechanical object to a temperature lower than previously thought possible, below the so-called "quantum limit."

The new NIST theory and experiments, described in the Jan. 12, 2017, issue of Nature, showed that a microscopic mechanical drum—a vibrating aluminum membrane—could be cooled to less than one-fifth of a single quantum, or packet of energy, lower than ordinarily predicted by quantum physics. The new technique theoretically could be used to cool objects to absolute zero, the temperature at which matter is devoid of nearly all energy and motion, NIST scientists said. "The colder you can get the drum, the better it is for any application," said NIST physicist John Teufel, who led the experiment. "Sensors would become more sensitive. You can store information longer. If you were using it in a quantum computer, then you would compute without distortion, and you would actually get the answer you want." "The results were a complete surprise to experts in the field," Teufel's group leader and co-author José Aumentado said. "It's a very elegant experiment that will certainly have a lot of impact." The drum, 20 micrometers in diameter and 100 nanometers thick, is embedded in a superconducting circuit designed so that the drum motion influences the microwaves bouncing inside a hollow enclosure known as an electromagnetic cavity. Microwaves are a form of electromagnetic radiation, so they are in effect a form of invisible light, with a longer wavelength and lower frequency than visible light. The microwave light inside the cavity changes its frequency as needed to match the frequency at which the cavity naturally resonates, or vibrates. This is the cavity's natural "tone," analogous to the musical pitch that a water-filled glass will sound when its rim is rubbed with a finger or its side is struck with a spoon. NIST scientists previously cooled the quantum drum to its lowest-energy "ground state," or one-third of one quantum. They used a technique called sideband cooling, which involves applying a microwave tone to the circuit at a frequency below the cavity's resonance. 
This tone drives electrical charge in the circuit to make the drum beat. The drumbeats generate light particles, or photons, which naturally match the higher resonance frequency of the cavity. These photons leak out of the cavity as it fills up. Each departing photon takes with it one mechanical unit of energy (one phonon) from the drum's motion. This is the same idea as laser cooling of individual atoms, first demonstrated at NIST in 1978 and now widely used in applications such as atomic clocks.

The latest NIST experiment adds a novel twist: the use of "squeezed light" to drive the drum circuit. Squeezing is a quantum mechanical concept in which noise, or unwanted fluctuations, is moved from a useful property of the light to another aspect that doesn't affect the experiment. These quantum fluctuations limit the lowest temperatures that can be reached with conventional cooling techniques. The NIST team used a special circuit to generate microwave photons that were purified or stripped of intensity fluctuations, which reduced inadvertent heating of the drum.

"Noise gives random kicks or heating to the thing you're trying to cool," Teufel said. "We are squeezing the light at a 'magic' level, in a very specific direction and amount, to make perfectly correlated photons with more stable intensity. These photons are both fragile and powerful."

The NIST theory and experiments indicate that squeezed light removes the generally accepted cooling limit, Teufel said. This includes objects that are large or operate at low frequencies, which are the most difficult to cool. The drum might be used in applications such as hybrid quantum computers combining both quantum and mechanical elements, Teufel said. A hot topic in physics research around the world, quantum computers could theoretically solve certain problems considered intractable today.

U.S. government scientists frantically copying climate data they fear will disappear under the Trump administration may get extra time to safeguard the information, courtesy of a novel legal bid by the Sierra Club. The environmental group is turning to open records requests to protect the resources and keep them from being deleted or made inaccessible, beginning with information housed at the Environmental Protection Agency and the Department of Energy. On Thursday, the organization filed Freedom of Information Act requests asking those agencies to turn over a slew of records, including data on greenhouse gas emissions, traditional air pollution and power plants.

The rationale is simple: Federal laws and regulations generally block government agencies from destroying files that are being considered for release. Even if the Sierra Club’s FOIA requests are later rejected, the record-seeking alone could prevent files from being zapped quickly. And if the records are released, they could be stored independently on non-government computer servers, accessible even if other versions go offline.

“There’s a lot of concern about environmental data writ large,” said Michael Halpern, deputy director of the Center for Science and Democracy at the Union of Concerned Scientists. The federal government is a repository for reams of information used widely by city planners, local governments, businesses and researchers to guide decisions about the location of new facilities, considerations of new infrastructure and conclusions about how quickly coastlines are changing.

Fears Stoked

To be sure, President-elect Donald Trump hasn’t described plans to pull the plug on government databases containing information on everything from Arctic ice to ocean temperatures. But fears were stoked by a questionnaire Trump transition advisers sent to the Energy Department in December, asking about the resources officials there use to do their jobs. Trump officials later said the memo wasn’t authorized.
Concerns also have been raised because of Trump’s past description of climate change as a hoax: “The concept of global warming was created by and for the Chinese in order to make U.S. manufacturing non-competitive,” Trump said in a 2012 tweet. Some of Trump’s transition advisers and nominees to fill key cabinet roles, notably Oklahoma Attorney General Scott Pruitt, tapped to lead the EPA, have questioned the role of humans in the phenomenon.

Canada’s Purge

“When you have people leading transition teams who have built careers out of questioning climate change science and questioning if there’s some kind of grand conspiracy to defraud the public, there’s no guarantee they will be terribly sympathetic to keep that information,” said Halpern, of the union, a science advocacy group.

The “nightmare bacteria” could fend off 26 different drugs

If it sometimes seems like the idea of antibiotic resistance, though unsettling, is more theoretical than real, please read on.

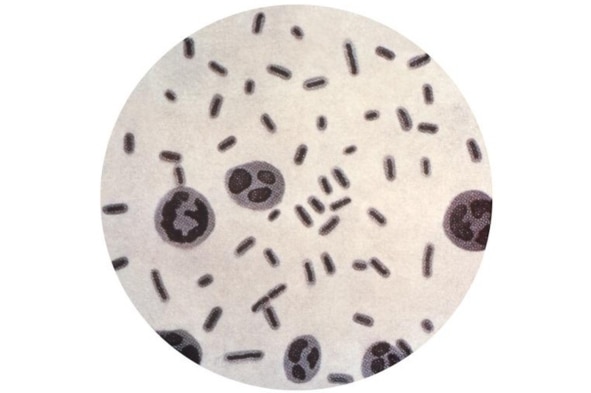

Public health officials from Nevada are reporting on a case of a woman who died in Reno in September from an incurable infection. Testing showed the superbug that had spread throughout her system could fend off 26 different antibiotics. “It was tested against everything that’s available in the United States … and was not effective,” said Dr. Alexander Kallen, a medical officer in the Centers for Disease Control and Prevention’s division of health care quality promotion.

Although this isn’t the first time someone in the US has been infected with pan-resistant bacteria, at this point, it is not common. It is, however, alarming. “I think this is the harbinger of future badness to come,” said Dr. James Johnson, a professor of infectious diseases medicine at the University of Minnesota and a specialist at the Minnesota VA Medical Center. Other scientists are saying this case is yet another sign that researchers and governments need to take antibiotic resistance seriously.

It was reported Thursday in Morbidity and Mortality Weekly Report, a journal published by the CDC. The authors of the report note this case underscores the need for hospitals to ask incoming patients about foreign travel and also about whether they had recently been hospitalized elsewhere.

Almost three-quarters of Japan’s biggest coral reef has died, according to a report that blames its demise on rising sea temperatures caused by global warming.

The Japanese environment ministry said that 70% of the Sekisei lagoon in Okinawa had been killed by a phenomenon known as bleaching. Bleaching occurs when unusually warm water causes coral to expel the algae living in their tissues, causing the coral to turn completely white. Unless water temperatures quickly return to normal, the coral eventually dies from lack of nutrition.

The plight of the reef, located in Japan’s southernmost reaches, has become “extremely serious” in recent years, according to the ministry, whose survey of 35 locations in the lagoon last November and December found that 70.1% of the coral had died. The dead coral has turned dark brown and is now covered with algae, the Yomiuri Shimbun said. The newspaper said the average sea surface temperature between last June and August in the southern part of the Okinawa island chain was 30.1 degrees centigrade, or one to two degrees warmer than usual, and the highest average temperature since records began in 1982, according to the Japan meteorological agency.

The ministry report follows warnings by the Coral Reef Watch programme at the US National Oceanic and Atmospheric Administration that global coral bleaching could become the “new normal” due to warming oceans. Experts said that bleaching had spread to about 90% of the Sekisei reef, a popular diving spot that covers 400sq km. A similar survey conducted in September and October last year found that just over 56% of the reef had died, indicating that bleaching has spread rapidly in recent months.
